I recently saw an active discussion in the Localization Professionals group on LinkedIn. The key point raised initially was that lower prices cause lower quality, and from there the discussion turned to who is to blame. (There has to be someone or something to blame, right?) Since people’s livelihoods and bonuses are at stake, the discussion can quickly become emotional. The culprits, according to the discussion, are bad localization managers, freelancers who accept lower rates, lack of standards, lack of differentiation, lack of process, the internet, marketplaces becoming more efficient, etc. In some way all these reasons are valid, but is there something else going on?

I would like to present another perspective on this issue, one that points to larger forces that are driving structural changes, pushing prices down and putting pressure on the old order. These forces exist independently of what we may feel about them; they are simply an observable fact of the translation landscape today.

The Increasing Volume of Content

Most global enterprises today are facing huge increases in the volume of content that needs to be translated. However, they are usually not given increased translation budgets to match this increase in content volume. So they have a few options to deal with this situation:

1) Refuse to translate the content that is out of budget range

2) Reduce prices to current translation suppliers (but increase volume)

3) Look for ways to increase translator productivity (MT, CAT tools, crowdsourcing) that enable them to get more done with the same money, i.e. raise individual translator productivity from 2,500 words/day to something much higher (10,000+?).

Thus, one of the driving forces behind lower prices is the need to make more and more content available for the global end-customer. The web and the growth in demand for dynamic content affect all kinds of global businesses. We can expect this content growth to continue and the demands for productivity to get louder. This does not mean that rates will automatically go lower, but we are seeing that it does have an impact, and this content growth is possibly also driving interest in MT. How else do you cope with 10X to 100X the content volume?
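To make the arithmetic behind that last question concrete, here is a minimal sketch of what a flat budget implies as volume grows. The budget and baseline volume are hypothetical placeholders chosen only for illustration; the 2,500 words/day figure is the one quoted above. The function names are my own, and the model assumes, simplistically, that a translator's daily earnings stay constant.

```python
# Rough fixed-budget arithmetic. All monetary figures and volumes are
# hypothetical placeholders, not data from any real enterprise.

BASELINE_WORDS_PER_DAY = 2_500  # productivity figure quoted in the text


def affordable_rate(budget: float, words: int) -> float:
    """Per-word rate that exactly exhausts a fixed budget."""
    return budget / words


def required_words_per_day(baseline_words_per_day: int, volume_multiplier: int) -> int:
    """Daily output a translator needs to keep the same daily earnings
    when the per-word rate falls in proportion to the volume increase."""
    return baseline_words_per_day * volume_multiplier


if __name__ == "__main__":
    budget = 100_000.0          # hypothetical annual translation budget
    baseline_words = 1_000_000  # hypothetical current annual volume

    for multiplier in (1, 10, 100):
        rate = affordable_rate(budget, baseline_words * multiplier)
        output = required_words_per_day(BASELINE_WORDS_PER_DAY, multiplier)
        print(f"{multiplier:>3}X volume: rate {rate:.4f}/word, "
              f"{output:,} words/day to hold daily earnings constant")
```

Under these assumptions, a move from 2,500 to 10,000 words/day covers only a 4X volume increase at constant earnings. Anything beyond that has to come from further automation, a different quality target, or unpaid community effort, which is where the rest of this post is headed.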

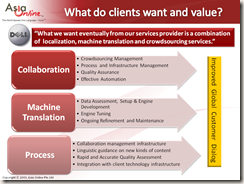

The Value of Localization Content

Another dimension that should also be examined is the value of what we translate. Most of the professional translation industry is focused on SDL (Software & Documentation Localization) and a relatively small and static part of corporate websites. However, it is increasingly being understood that while this “traditional localization content” is necessary, and will continue to be so, it is not where the greatest value lies for a global enterprise trying to build global customer loyalty. Very few people read manuals; in fact, some say that translators are often the only people who actually read manuals, especially in the IT and consumer electronics industries. If users do not value this documentation, we will continue to see price and efficiency pressures for this kind of translation.

Customer and community generated content is increasingly important, especially for complex IT and industrial engineering products. So we have major IT companies like Microsoft, Dell and Symantec who find that the localization content they are translating and producing serves only 2% of their real customer inquiries. The customer need and expectation for a large amount of searchable content continues to grow, and given the velocity of information creation, translation automation and MT become necessary. This is another source of downward pressure on prices, though we also see higher volumes and new attitudes to, and definitions of, translation quality. Global enterprises realize that new quality standards are required for this massive content, and new production models and approaches are being sought, as standard TEP costs and processes are not viable for meeting these needs.

The Global Demand for Translation & Knowledge

The third dimension is the raw demand for knowledge, information and collaboration from the world beyond the professional translation world. The thirst for knowledge across the globe is driving new collaboration models like crowdsourcing and the development of open source software and tools, and Open Data initiatives like Meedan, EC, Opus and the World Bank continue to build momentum. It is not clear that costly, membership-only initiatives like TAUS will prosper unless they do in fact provide higher quality data and add some real value. We should expect to see the growing use of amateurs, as we see at Yeeyan and TED. We also see the growth of fan translations, which prove that fans can create viable long-term initiatives, and again this shows that it is possible to reduce translation costs when motivated communities can be built. Adobe recently decided to use the crowd, and a technology platform to manage the crowd, to further its core business mission of developing international market revenue. And guess what one of the outcomes of this is? Lower translation costs.

So the poor translator and most LSPs are caught in these major shifts, which are clearly out of the control of any single player in the localization world. We all (buyers, LSPs and translators) have to learn how to deal with these forces, as they affect us all. In future, we should expect that ongoing productivity improvements will matter, and skill and experience with translation automation will be increasingly valued.

I think skill with SMT (I am biased) will especially matter, as it is a technology that brings together TM, crowdsourcing, project management expertise, linguistic competence and collaboration infrastructure in a way that can demonstrate and deliver higher productivity and lower costs per word, and yet maintain quality. Traditional localization work will likely be under increasing price pressure because of its low relative value, and we will begin to see global enterprises that seek to translate 10X to 100X their current volume. The price drops perhaps also signal that we are seeing the collapse of the old business model of static repetitive content done in a TEP cycle. While this is painful for all concerned, it is also a time when some will learn how to bring the technology, people and processes together to be able to deliver on new market demands. Recent announcements from Lionbridge and others show that change is necessary, but making it mandatory and punitive is to my mind clumsy and ill-advised. (This old command and control mentality is really hard to subdue.) I suspect that change initiatives that do not create clear win-win scenarios for all the parties concerned will struggle and face growing resistance.

I think that initiatives that marginalize, exploit or strong-arm translators are doomed to fail. Willing, motivated and competent translators have been the key to delivering quality in the past and will be even more so in future. Disruptive change is not all it is cracked up to be. Even Google and Microsoft found that revolution consisted of a continuing sequence of small changes as they built their empires, and I suspect that the same approach will also work best here. It would not surprise me to find new leadership emerge from outside the industry, for these are surely interesting times, and openness and real collaboration are hard to find in the professional translation world.

Innovation & Collaboration

So how do we respond to these forces? What is an intelligent response to these kinds of macro changes?

I don't have many answers but list some possibilities below, and I do understand that this is very easily said and not so easily done:

-- Find out how to increase productivity while maintaining or even raising quality. (Better, more efficient automation processes and effective use of MT, especially data-driven SMT that learns and improves.)

-- Work together with global enterprises and find out what the highest value content is. Then learn how to make it multilingual efficiently and effectively as a long-term partnership mission. (I doubt that this content is software and document localization or a few select pages on the corporate website.)

-- Develop better, collaborative relationships with customers (the translation buyers) and with the translators who are the keys to quality, to build long-term leverage and benefit for all the parties in the game.

-- Develop higher value-add services like transcreation (is there really no better word?) in addition to basic translation services.

-- Develop new business models and new ways to get high-value content done where there is a much tighter and longer term commitment from buyers, vendors and a team of translators i.e. a common and shared mission orientation rather than single project management and orientation.

This is a time of big structural change and it will require innovation and collaboration on a new scale. The question that I have been asking and I think the market is also asking is:

How do we reduce costs, maintain quality, increase productivity and speed up the translation process?

I know this is somewhat vague, but I think it should start with a new way to look at the problem. If we look at these forces as an opportunity or challenge rather than as threats that we bash and attack, we might find some constructive and useful answers. Today, I see overwhelmingly negative feedback on MT, crowdsourcing, open source and even collaboration initiatives from many in the professional translation industry in social forums. Very few are trying to understand these forces better. People often go through a sequential emotional cycle of attack, fear and despair before they learn to cope, and eventually even thrive, when facing disruptive change. Those who get stuck at fear and despair often end up as victims. It is said that those who ignore the lessons of history are doomed to repeat them. Maybe we should be talking much more about the Luddites and the “Luddite fallacy” at all the conferences we hold. If the Luddite fallacy were true, we would all be out of work, because productivity has been increasing for two centuries. Perhaps the biggest change we need in order to deal with these forces is to adopt a new, dispassionate and open view. A new view is Step 1 in developing new strategies and new approaches to managing these forces, and technology is only part of the solution. I predict we will only see real success where technology, process and collaboration come together.